By Samir Brahimi, Intelligence Manager at Riskline

While the conflict between the United States (US), Israel and Iran rages on, a parallel war is being fought online, where propaganda, disinformation and AI-generated content make it more difficult to know what is actually happening.

Information warfare has always been a core component of real-world conflict. However, the ongoing war in the Middle East has seen a proliferation of misrepresented or fake content, exacerbated by the use of AI tools. Depictions of Iranian ballistic missiles sinking the USS Abraham Lincoln, videos of crying US soldiers, footage of the Burj Khalifa on fire, images of Ayatollah Ali Khamenei’s body buried among the rubble of his home, and claims that some Gulf states had attacked Iran are a small sample of the content that has been shared widely but is entirely fake.

The sources and scale of disinformation

Disinformation comes from all directions. State media, pro-Iran accounts, pro-Israel accounts and profit-seeking engagement farmers all contribute to the pollution of the information space, blurring the line between fact and fiction. Purely AI-generated images and videos, AI-manipulated content, recycled real footage of past conflicts and video game footage have all been circulated online with misleading captions. It is becoming increasingly difficult to distinguish AI-generated content from real content, especially in the gap between news breaking and footage being verified. Some fake content online is spread unintentionally, including by people in positions of power, users with large followings and “verified” accounts. However, much of it is linked to state-aligned influence operations, often involving dozens of coordinated social media accounts and timed around real attacks to maximise confusion and emotional impact.

Platform dynamics and algorithmic amplification

The situation is compounded by social media platforms themselves. Fake content posted on one platform quickly spreads to others and is seen by millions of people before it is fact-checked. Algorithms prioritise engagement over accuracy, meaning that false and sensationalist stories are amplified. Bots and automated accounts artificially boost content, making it appear more popular or credible than it actually is. Social media companies, meanwhile, have been either slow to moderate content and remove misinformation or not committed to doing so. The result is an information environment that is increasingly challenging to navigate, one in which real, verified information is hidden among massive amounts of noise.

Cutting through the noise with verified intelligence

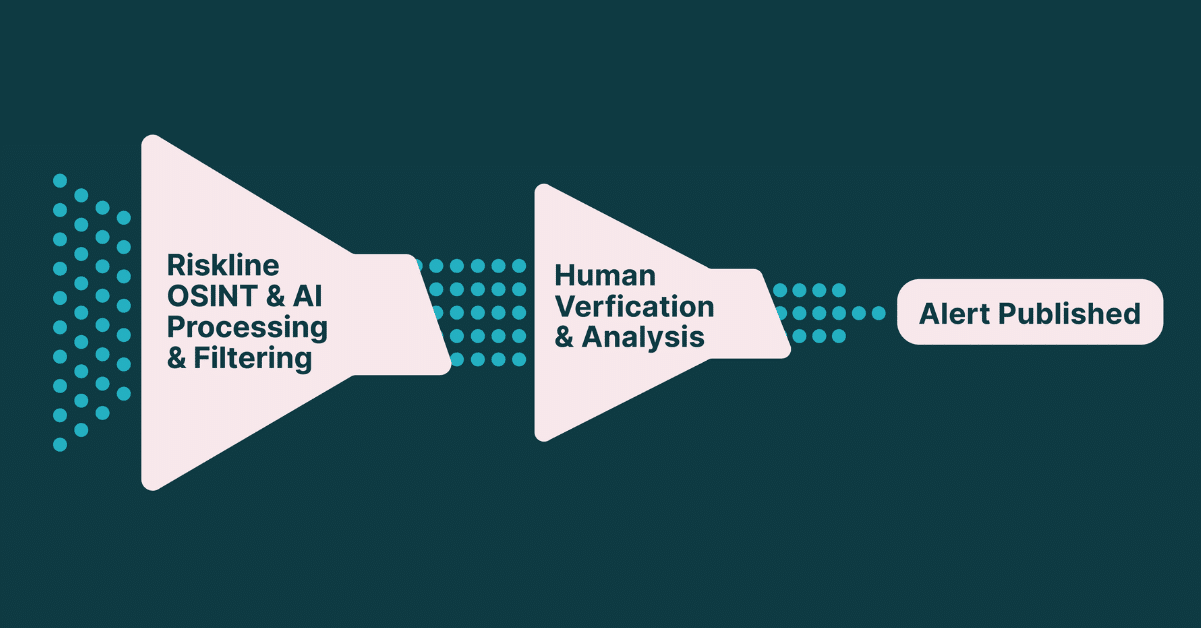

Riskline’s proprietary platform draws on thousands of sources — official primary sources, trusted international and local media, and social media feeds — while actively blocking known producers and spreaders of false or misleading information. Sources are cross-referenced. Our analysts apply OSINT methodologies to cut through the noise and deliver verified, actionable intelligence.

In the month before the US and Israel attacked Iran, our sources captured thousands of Iran-related messages, but only 13% met our relevance threshold. The rest was background noise around nuclear talks and the US military build-up in the region. When the conflict began on 28 February, that changed. Total message volumes surged, but so did the quality of the signal: relevance jumped to 59%, roughly four and a half times the pre-war rate. Our systems were already tracking developments in the Middle East, and when the conflict began, they continued to filter the noise and surface what mattered.
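
For readers who want to check the comparison between the two relevance rates, the arithmetic can be sketched in a few lines of Python. This is an illustrative calculation only, using the two percentages quoted above; the function names are hypothetical and do not describe how Riskline's platform computes relevance.

```python
def fold_change(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    if before <= 0:
        raise ValueError("the baseline rate must be positive")
    return after / before


def percentage_point_change(before: float, after: float) -> float:
    """Return the difference between two rates in percentage points."""
    return (after - before) * 100


# The two relevance rates quoted in the text, as fractions of all
# captured messages.
pre_war_rate = 0.13
wartime_rate = 0.59

ratio = fold_change(pre_war_rate, wartime_rate)
points = percentage_point_change(pre_war_rate, wartime_rate)

print(f"Relevance rose {ratio:.1f}x, a gain of {points:.0f} percentage points")
```

Reporting both the ratio and the percentage-point gain guards against overstating the change: the ratio alone depends heavily on how low the baseline was.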

The information war in the Middle East demonstrates what has become the new normal during times of crisis. As AI continues to make it easier to create and spread false information, the volume and sophistication of disinformation will also increase. Having access to verified intelligence in this type of online environment therefore becomes a prerequisite for managing risk to safety, operations and reputation.